By Maria Moloney

For much of the GDPR era, organisations have maintained compliance through documentation. They draft policies, publish privacy notices, conduct DPIAs, maintain RoPAs, deliver training, and prepare incident response plans. All of this is necessary, and the law requires it. In practice, however, GDPR accountability has often relied on a paper-based model. If you can show that the right governance artefacts are in place, regulators usually treat you as compliant unless something goes wrong.

That model is now under pressure, and AI governance explains why.

AI systems, especially those that process personal data or operate autonomously, do not fit a static compliance model. These systems are dynamic and adaptive. Their training data changes. Teams retrain models. Outputs drift. Integrations expand, and risk profiles shift over time. In that environment, static documents and one-off assessments no longer reflect real-world compliance.

Regulatory thinking already reflects this shift. Under the GDPR, organisations must implement appropriate technical and organisational measures and be able to demonstrate that those measures work in practice. Under the EU AI Act, this operational logic becomes even clearer. For high-risk AI systems, abstract principles are not enough. Organisations must embed those principles in a layered governance architecture that combines continuous risk management, data governance controls, traceable logging, defined human oversight, and safeguards for robustness and reliability. These obligations concern how systems perform in production, not how policies describe them.

As a result, the quality of your evidence, not the quality of your policies, will determine AI compliance.

In an AI-related investigation, regulators are unlikely to start by asking whether you had an AI ethics policy. They will ask whether you can show how you identified and monitored risks over time, how you selected and implemented safeguards, and how you logged and reviewed outputs. They will want to see how you detect drift or bias and how you escalate incidents. They will also expect logs, version histories, access records, training-data provenance, model-change records, and incident timelines. Operational evidence, not governance narrative, will decide the outcome.

Many organisations are not yet prepared for this. AI governance still sits in the familiar compliance comfort zone. Legal teams draft principles. Compliance teams run DPIAs at project inception. Ethics committees meet periodically, while technical teams build and deploy models. These functions matter, but they do not automatically create a continuous, auditable evidence layer that shows how AI systems behave in the real world.

The uncomfortable reality is that most organisations cannot systematically show how their training data changed over time, which safeguards were applied to which model versions, how error rates evolved, or how often humans intervened. Yet these are exactly the questions that emerging regulatory regimes will ask.

We are entering an evidence-based phase of AI compliance, much like the shift financial regulation underwent after the 2008 global financial crisis. It is no longer enough to say that you manage risk appropriately. You must show, with traceable proof, how you manage risk continuously, day by day.

This shift carries architectural implications. The move to evidence-based compliance is not just a policy change; it requires organisations to redesign how they build, connect, and govern systems.

In other words, accountability is now a system design issue, not a documentation issue.

AI governance cannot sit only within legal or compliance functions because the decisive evidence sits in technical systems: logs, monitoring dashboards, model registries, access controls, version control systems, and incident management platforms. If these layers do not connect to governance oversight, organisations cannot demonstrate accountability in any meaningful way. Nor can they retrofit accountability after deployment. If teams do not design systems from the outset to generate audit-quality logs and traceable control mappings, the evidence will not exist when they need it.
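To make "designed from the outset to generate audit-quality logs" more concrete, here is a minimal Python sketch. It assumes a hash-chained, append-only log, which is one common way to make records tamper-evident; the `AuditLog` class, its fields, and the example events are hypothetical and are not drawn from any product named in this article. A real deployment would also need durable storage, access control, and retention rules.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Hypothetical append-only log: each entry commits to the hash of the one before it."""
    entries: list = field(default_factory=list)

    def append(self, event: str, model_version: str, details: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "model_version": model_version,
            "details": details,
            "prev_hash": prev_hash,
        }
        # Hash a canonical JSON encoding so identical content always gives the same digest.
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; an edited, removed, or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("model_deployed", "v1.2", {"approved_by": "risk-committee"})
    log.append("human_override", "v1.2", {"case_id": "A-17"})
    print(log.verify())  # True
    # Quietly editing an earlier record after the fact is detectable.
    log.entries[0]["details"]["approved_by"] = "someone-else"
    print(log.verify())  # False
```

The design choice here is that integrity is checked from the records themselves, so the evidence can be verified later without trusting whoever holds the log.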

The traditional separation between data protection compliance, cybersecurity compliance, and AI ethics is also becoming untenable. GDPR, NIS2, and the EU AI Act increasingly focus on operational risk management, technical safeguards, logging, and incident response. All three now examine what actually happens inside systems rather than what a policy promises.

Figure 1: Joined-up AI governance

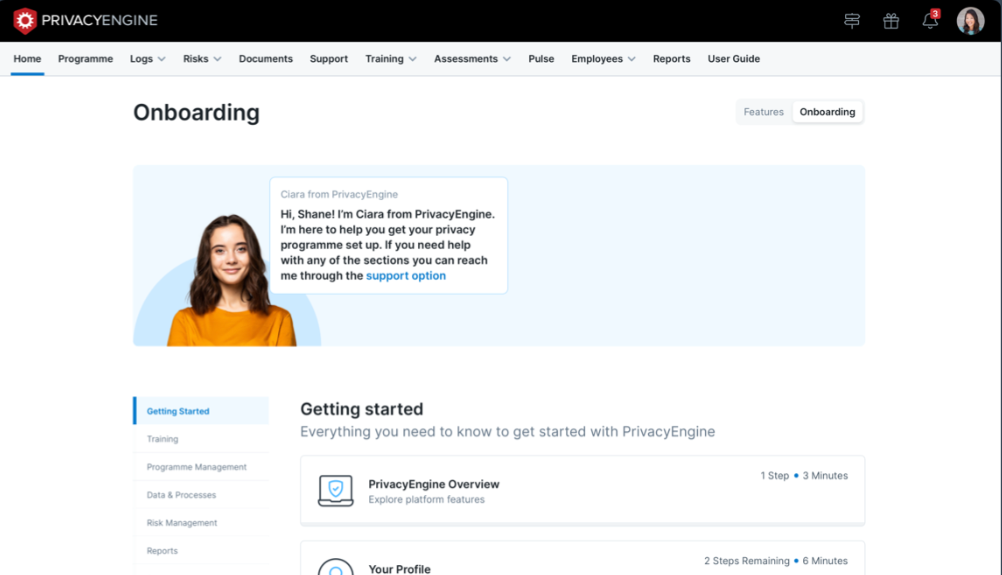

Figure 1 shows that when organisations break down the divide between siloed compliance regimes, their operational layers converge and integrate. Data security and monitoring tools, such as Data Security Posture Management (DSPM), Data Loss Prevention (DLP), and Data Protection and Remediation (DPR) solutions from vendors like Forcepoint, provide visibility into how data moves, where it sits, and how users access it. Governance platforms such as PrivacyEngine map regulatory obligations to documented controls and governance decisions, supporting RoPA management, DPIAs and TIAs, data subject rights management, breach management, vendor logging and assessments, structured risk management, retention controls, and training oversight. When these layers work together, they create a traceable evidence chain: from regulatory obligation to technical control, from control to system behaviour, and from behaviour to documented oversight.

This is where the compliance battleground is shifting. It is not about the elegance of policies or the strength of stated principles. It is about whether an organisation can trace an obligation to a real, verifiable safeguard, and then trace that safeguard to real, verifiable operational behaviour.
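The traceability test in the previous paragraph can be modelled as a chain of linked records. The Python sketch below is illustrative only: the `Obligation` and `Control` types, their fields, the `trace_gaps` helper, and the example identifiers are hypothetical assumptions, not the data model of any governance platform mentioned here. It flags any obligation with no mapped control, any control with no operational evidence, and any control whose evidence nobody has reviewed.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    control_id: str
    description: str
    # Hypothetical: IDs of log or monitoring records showing the control operating.
    behaviour_evidence: list = field(default_factory=list)
    # Hypothetical: IDs of documented oversight reviews of that evidence.
    oversight_reviews: list = field(default_factory=list)


@dataclass
class Obligation:
    obligation_id: str
    source: str  # free text naming the regulation or clause the obligation comes from
    controls: list = field(default_factory=list)


def trace_gaps(obligation: Obligation) -> list:
    """List broken links in the chain obligation -> control -> behaviour -> oversight."""
    gaps = []
    if not obligation.controls:
        gaps.append(f"{obligation.obligation_id}: no control mapped")
    for control in obligation.controls:
        if not control.behaviour_evidence:
            gaps.append(f"{control.control_id}: no operational evidence")
        if not control.oversight_reviews:
            gaps.append(f"{control.control_id}: evidence never reviewed")
    return gaps


if __name__ == "__main__":
    logging_control = Control(
        "CTL-01", "Inference requests logged with model version",
        behaviour_evidence=["log-batch-017"],
    )
    review_control = Control("CTL-02", "Human review of flagged outputs")
    obligation = Obligation(
        "OBL-01", "Record-keeping for a high-risk AI system",
        controls=[logging_control, review_control],
    )
    for gap in trace_gaps(obligation):
        print(gap)
    # CTL-01: evidence never reviewed
    # CTL-02: no operational evidence
    # CTL-02: evidence never reviewed
```

Running a check like this on a schedule, rather than once at project inception, is one way to surface a broken link before a regulator does.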

Spreadsheets and static documents cannot support that model. They cannot link DPIAs to live controls, incidents to model changes, or regulatory requirements to system logs in a structured, auditable way. Boards and regulators will increasingly expect coherent, near-real-time visibility into AI risk posture.

If GDPR taught organisations that data protection is a legal obligation, AI governance is teaching them that accountability is an architectural one. Policies still matter. Principles still matter. Ethics still matter. But none of them can make up for the lack of evidence showing how AI systems actually behave and how organisations actually control their risks.

Over the next decade, intentions and documentation will not determine AI compliance.

Evidence will.